When you first dive into the swamp of Explainable AI (XAI), the sheer number of methods can be overwhelming. To

Tag: Explainable AI

Taxonomy of Explainable AI (XAI) for Computer Vision

Occlusion

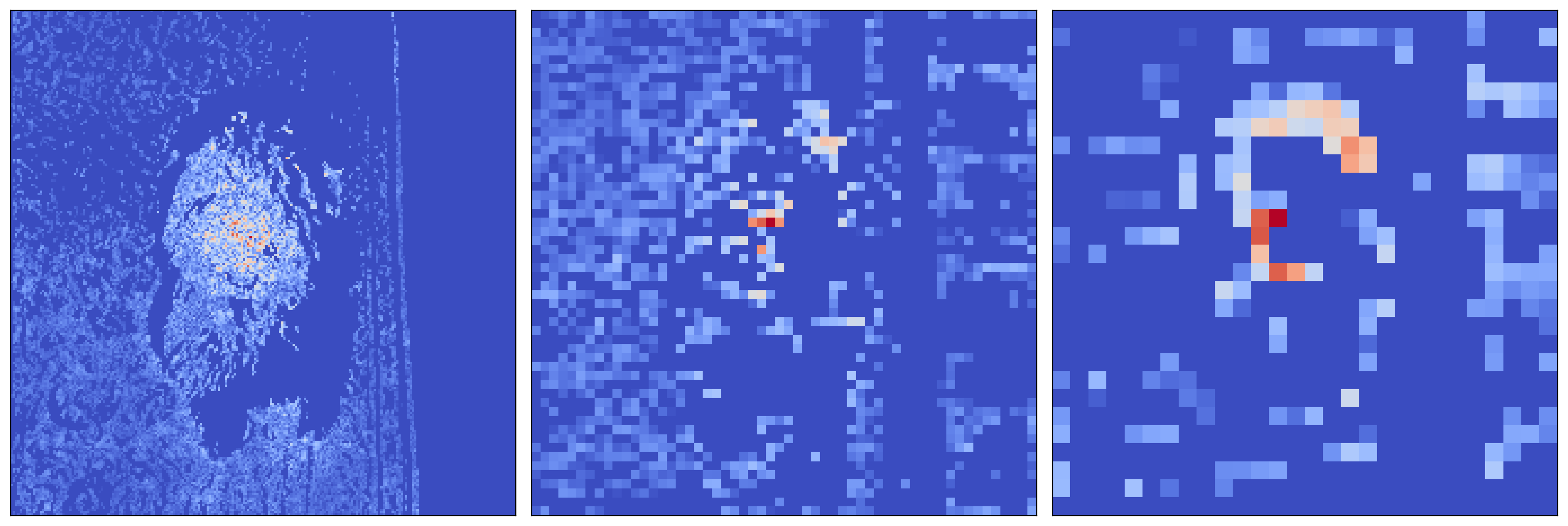

Permutation-based Saliency Maps for Computer Vision

Deep learning models rely on certain features in an image to make decisions. These are aspects like the colour of

Guided Backpropagation from Scratch with PyTorch Hooks

Learn to interpret computer vision models by visualising the gradients of the input image and intermediate layers

Convolutional neural networks (CNNs) make decisions using complex feature hierarchies. It is difficult to unveil these using methods like occlusion,

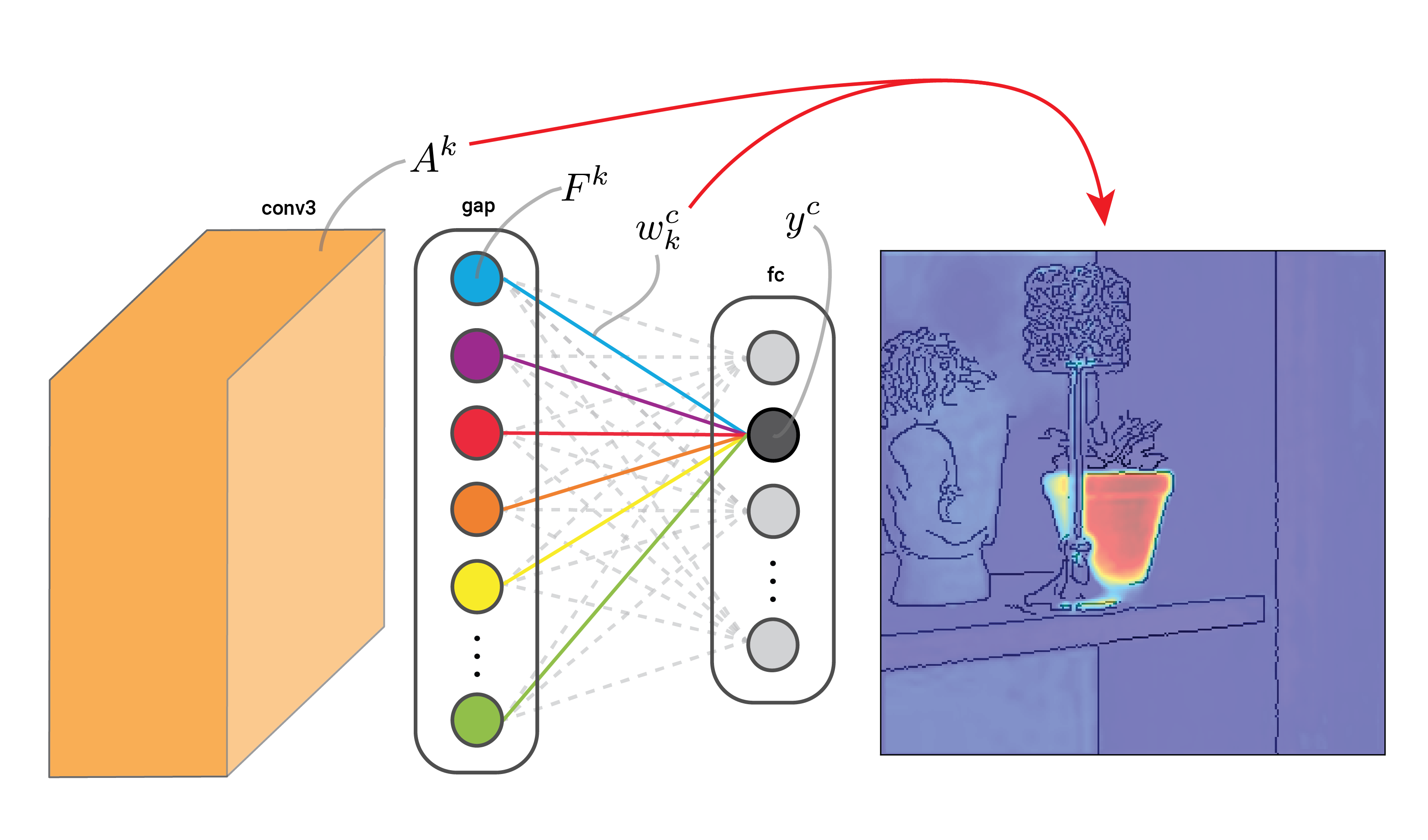

Class Activation Maps (CAMs) from Scratch

How global average pooling layers lead to intrinsically interpretable neural networks

Interpretability by design is usually a conscious effort. Researchers will think of new architectures or adaptations to existing ones that

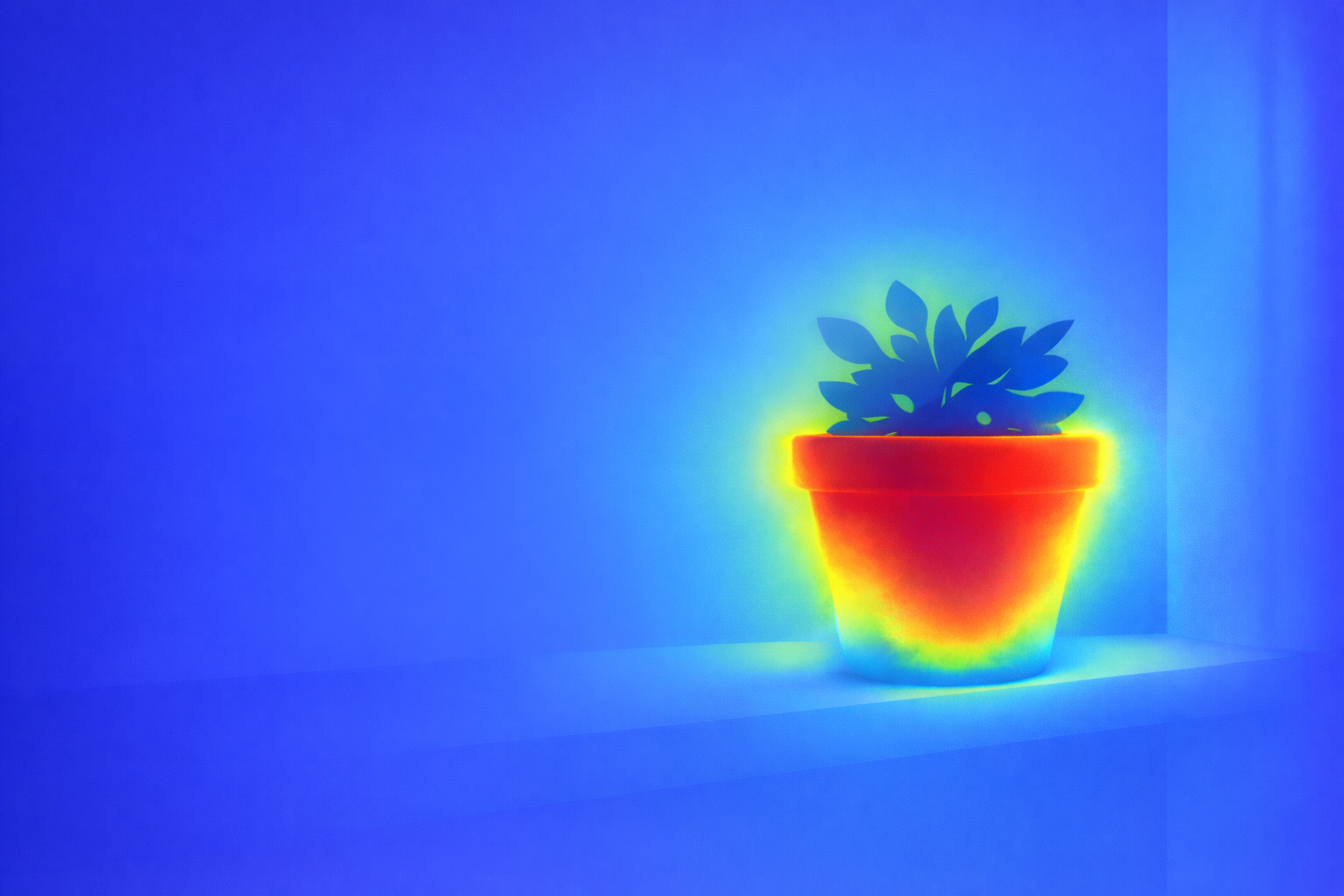

Grad-CAM for Explaining Computer Vision Models

Understanding the math, intuition and Python code for Gradient-weighted Class Activation Mapping (Grad-CAM)

Most of the gradient-based methods we will talk about propagate gradients all the way back to the input image. These

Explainable AI for Computer Vision: Free Python Course

Welcome to Explainable AI for Computer Vision — a free course that covers the theory and Python code for XAI

The Importance of Explainable AI (XAI) in Computer Vision

The 7 benefits of XAI for CV: uncovering systematic bias, explaining edge cases, improving fairness, safety and model efficiency, enhancing user interaction and building trust in machine learning

Like many millennials, I have satisfied my need to nurture something with an unreasonably large pot plant collection. So much

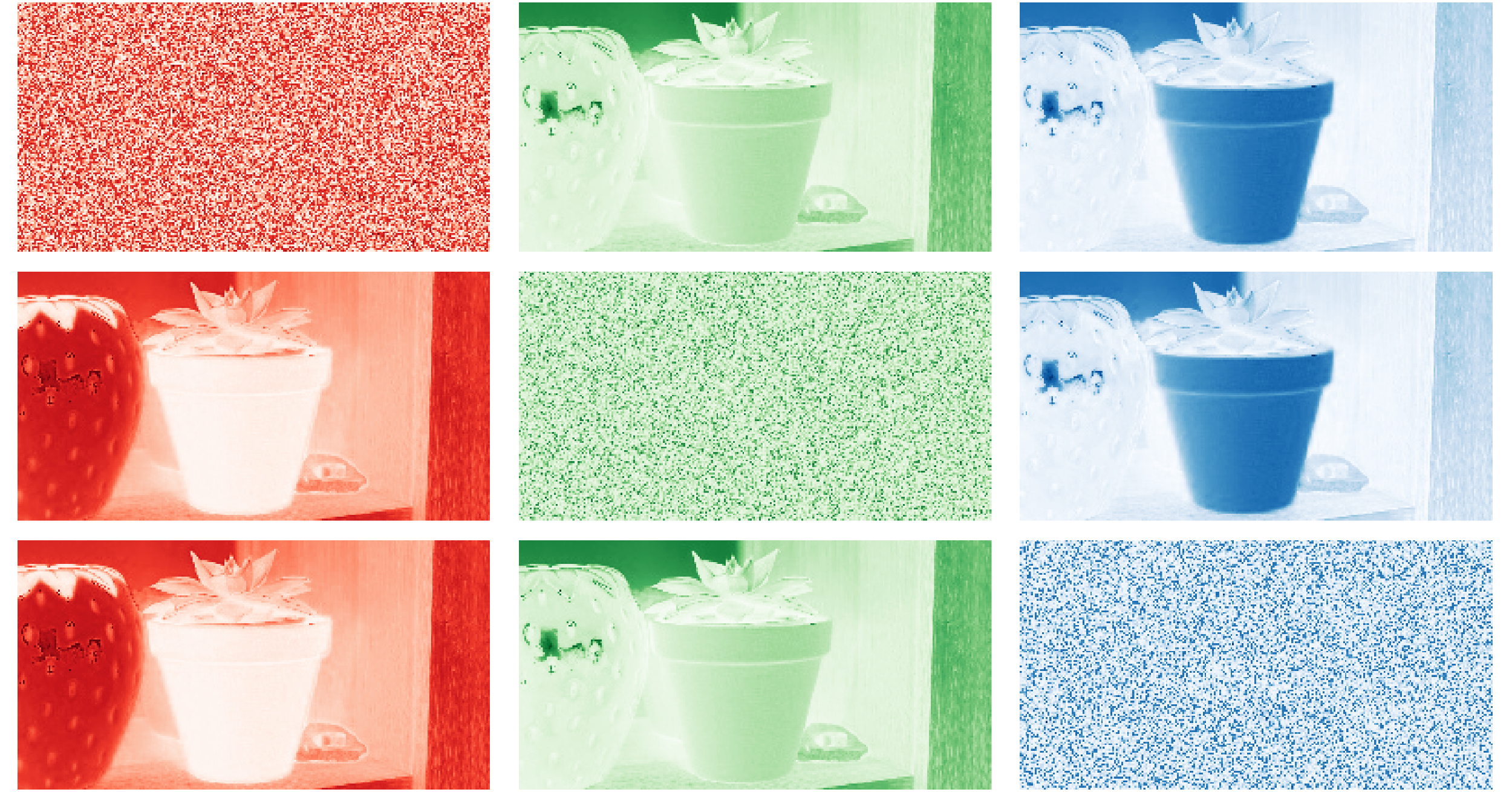

Permutation Channel Importance

A global interpretability method for understanding which channels in a computer vision model are most important

An image is a 2D grid of pixels and, in a normal image, each pixel has three values. We call these